Ever wondered how attackers can sneak into systems by just messing with the timing of things? It’s all about race conditions. These aren’t your typical software bugs; they pop up when multiple processes or threads try to access the same piece of data at the same time, and the outcome depends on who gets there first. Understanding race condition exploitation is key to spotting and stopping these sneaky attacks before they cause real damage.

Key Takeaways

- Race conditions happen when the order of operations matters, and attackers can exploit this timing to gain unauthorized access or control.

- A common way to exploit race conditions is through Time-of-Check to Time-of-Use (TOCTOU) attacks, where an attacker changes a resource after the program has checked it but before the program uses it.

- These exploits can lead to serious issues like unauthorized data changes, gaining higher system privileges, or even making services unavailable.

- Preventing race conditions involves careful programming, like using locks to make sure only one process accesses shared data at a time.

- Tools for finding race conditions include static code analysis and dynamic testing, helping developers catch these bugs early.

Understanding Race Condition Exploitation

Race conditions are a bit like trying to get two people to use the same bathroom at the exact same time – chaos often ensues. In computing, this happens when the outcome of a process depends on the unpredictable timing of multiple threads or processes accessing shared data. It’s not about malicious intent initially, but rather a flaw in how concurrent operations are managed. The core issue is that operations expected to happen in a specific order can get interleaved in unexpected ways.

Defining Race Conditions in Computing

A race condition occurs when two or more threads or processes access a shared resource without adequate synchronization, and at least one of them modifies it. The final state of the resource depends on the precise order in which the threads execute their operations. Because operating systems schedule threads and processes in ways that aren’t strictly predictable, the sequence of operations can vary from one execution to the next. This makes race conditions notoriously difficult to reproduce and debug.

Think about a simple bank account balance. Suppose each withdrawal runs three steps: check the balance, compute the new balance, and write it back. If two people withdraw at the same time, both requests can check the balance before either one writes its update. Each check sees enough funds, so both withdrawals succeed even though the account cannot cover the two together.
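The double withdrawal can be reproduced in a few lines. This is a minimal sketch, not a real banking API: the `Account` class, its method names, and the `pause` hook are all hypothetical, and the pause exists only to widen the race window so the outcome is repeatable. The unsafe path uses a barrier to hold both threads after their check; the locked path makes the check and the debit one critical section.

```python
import threading
import time


class Account:
    """Hypothetical account used only for illustration."""

    def __init__(self, balance):
        self.balance = balance
        self._lock = threading.Lock()

    def withdraw_unsafe(self, amount, pause):
        if self.balance >= amount:      # check
            pause()                     # race window, widened on purpose
            self.balance -= amount      # act on a check that may be stale
            return True
        return False

    def withdraw_locked(self, amount, pause):
        with self._lock:                # check and debit form one critical section
            return self.withdraw_unsafe(amount, pause)


def run_two_withdrawals(withdraw, amount, pause):
    """Run the same withdrawal from two threads and collect both results."""
    results = []
    workers = [
        threading.Thread(target=lambda: results.append(withdraw(amount, pause)))
        for _ in range(2)
    ]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    return sorted(results)


# Unsafe: the barrier holds each thread after its check until both have checked.
both_checked = threading.Barrier(2, timeout=5)
unsafe = Account(100)
unsafe_results = run_two_withdrawals(unsafe.withdraw_unsafe, 80, both_checked.wait)

# Locked: the second thread cannot start its check until the first has debited.
safe = Account(100)
safe_results = run_two_withdrawals(safe.withdraw_locked, 80, lambda: time.sleep(0.01))

print(unsafe_results, unsafe.balance)   # both withdrawals report success
print(safe_results, safe.balance)       # [False, True] 20
```

In the unsafe run both withdrawals of 80 are approved against a balance of 100. With the lock, the second request sees the already reduced balance and is refused.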

The Exploitation Lifecycle of Race Conditions

Exploiting a race condition typically follows a pattern:

- Discovery: Identifying a shared resource and a sequence of operations that are not atomic. This often involves code review or dynamic analysis.

- Triggering: Crafting specific inputs or timing conditions to force the interleaving of operations in a way that benefits the attacker.

- Exploitation: Executing the exploit to achieve a desired outcome, such as unauthorized access, data modification, or privilege escalation.

- Verification: Confirming that the exploit was successful and the intended malicious state has been achieved.

This lifecycle isn’t always linear, and attackers might iterate through these stages multiple times. The timing is everything here; a successful exploit often relies on hitting a very narrow window of opportunity.

Common Scenarios for Race Condition Vulnerabilities

Race conditions can pop up in many places, but some scenarios are more common:

- File System Operations: When multiple processes try to create, delete, or modify files simultaneously. For example, checking if a file exists and then creating it can be vulnerable if another process creates it between the check and the creation.

- Database Transactions: While databases often have built-in locking mechanisms, poorly designed transactions can still lead to race conditions.

- Memory Management: In complex memory allocation or deallocation routines, especially in multi-threaded environments.

- Network Communication: Handling incoming requests or managing shared network buffers where timing can affect data processing.

Understanding these scenarios helps in identifying potential weaknesses before they can be exploited.
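For the file-creation case, one common fix is to let the operating system do the check and the creation as a single step. The sketch below contrasts the two approaches; the function names and the `app.lock` file name are made up for the example, and it assumes a platform where `os.O_EXCL` is honored (true for local POSIX file systems).

```python
import os
import tempfile


def create_racy(path):
    # Check, then act: another process can create `path` between these two steps.
    if not os.path.exists(path):
        with open(path, "w") as f:
            f.write("owner: racy\n")
        return True
    return False


def create_exclusive(path):
    # O_CREAT | O_EXCL asks the kernel to create the file only if it does not
    # exist yet, as one operation, so there is no gap for another process to win.
    try:
        fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o600)
    except FileExistsError:
        return False
    with os.fdopen(fd, "w") as f:
        f.write("owner: exclusive\n")
    return True


workdir = tempfile.mkdtemp()
target = os.path.join(workdir, "app.lock")

first = create_exclusive(target)    # creates the file
second = create_exclusive(target)   # refused: the file already exists
print(first, second)                # True False
```

Python’s built-in `open(path, "x")` gives the same exclusive-creation behavior with less ceremony.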

Attack Vectors for Race Condition Exploitation

Race conditions, while often subtle, can be exploited through several common attack vectors. Understanding these pathways is key to defending against them. Attackers look for opportunities where the timing of operations can be manipulated to achieve an unintended outcome.

Exploiting Concurrency in Multi-threaded Applications

Multi-threaded applications, by their very nature, involve multiple threads of execution running concurrently. This concurrency is a prime breeding ground for race conditions. When threads access shared resources without proper synchronization, an attacker can try to influence the order of operations. This might involve sending specific inputs or triggering actions at precise moments to cause a thread to read data after it’s been modified by another thread, or to perform an action based on a state that’s no longer valid.

- Timing Attacks: Manipulating the timing of thread execution to force a specific interleaving of operations.

- Resource Starvation: Overloading a system with requests to make certain threads execute slower, increasing the chance of a race condition.

- Exploiting Locks: If locks or mutexes are implemented incorrectly, an attacker might find ways to bypass them or cause deadlocks that lead to exploitable states.

The core idea here is that the attacker isn’t necessarily finding a flaw in the code’s logic itself, but rather exploiting the environment in which the code runs – specifically, the unpredictable nature of concurrent execution.

Leveraging Timing Differences in Networked Systems

Networked systems introduce another layer of complexity due to inherent latency and variable network conditions. Attackers can exploit these timing differences. For instance, in a client-server interaction, an attacker might try to send requests in a way that causes the server to process them out of the intended order. This is particularly relevant in systems where multiple requests from the same client are processed concurrently or where responses are queued.

- Replay Attacks: Capturing and replaying network packets to manipulate the sequence of operations on the server.

- Packet Manipulation: Modifying packet timing or order to influence how a server processes requests.

- Exploiting Asynchronous Operations: Targeting systems that handle requests asynchronously, where the order of completion is not guaranteed.
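Asynchronous handlers can race even on a single thread, because every `await` is a point where another request may run. The sketch below is a hypothetical single-use coupon (the `Coupon` class and its methods are invented for illustration); `asyncio.sleep(0)` stands in for a database or network call between the check and the update.

```python
import asyncio


class Coupon:
    """Hypothetical single-use coupon."""

    def __init__(self):
        self.redemptions = 0
        self._lock = asyncio.Lock()

    async def redeem_racy(self):
        if self.redemptions == 0:        # check
            await asyncio.sleep(0)       # yields control, like real I/O would
            self.redemptions += 1        # act
            return True
        return False

    async def redeem_locked(self):
        async with self._lock:           # only one request in check-and-act at a time
            return await self.redeem_racy()


async def main():
    racy, locked = Coupon(), Coupon()
    racy_results = await asyncio.gather(racy.redeem_racy(), racy.redeem_racy())
    locked_results = await asyncio.gather(locked.redeem_locked(), locked.redeem_locked())
    return racy.redemptions, racy_results, locked.redemptions, locked_results


racy_count, racy_results, locked_count, locked_results = asyncio.run(main())
print(racy_count, racy_results)       # 2 [True, True]
print(locked_count, locked_results)   # 1 [True, False]
```

Two concurrent requests redeem the coupon twice in the racy version. An in-process lock like this only helps within one server process; across several processes or hosts the check would have to be enforced by the shared data store.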

Race Conditions in File System Operations

File system operations are a classic area where race conditions can occur, especially in multi-user or multi-process environments. When multiple processes or threads try to access or modify the same file simultaneously, without proper locking, unexpected behavior can result. This is often seen in temporary file creation, file deletion, or permission changes.

- TOCTOU (Time-of-Check to Time-of-Use): This is a very common pattern. A program checks if a file exists or has certain permissions, and then later uses that file. An attacker can change the file between the check and the use, leading to exploitation. For example, a program verifies that a path is not a symbolic link and then opens it, while an attacker replaces the file with a link to a sensitive file in between.

- Non-Atomic Operations: Many file system operations are not inherently atomic. If an operation is interrupted or interleaved with another, it can leave the file system in an inconsistent state.

- Shared File Access: Multiple applications or services accessing the same configuration or data file can lead to race conditions if not properly synchronized.

| Scenario | Vulnerable Operation Example | Potential Impact |
|---|---|---|
| Temporary File Creation | Generating a name with mktemp(), then opening that name later | Symbolic link attack, overwriting sensitive files |
| File Deletion | Checking for file existence before deleting | Race to replace deleted file with malicious content |
| Permission Modification | Checking permissions before writing, then writing | Unauthorized data modification or access |
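For the temporary-file row, the usual remedy is an API that picks the name and creates the file in one step. A short sketch using Python’s standard `tempfile.mkstemp()`; the `report-` prefix and the file contents are placeholders.

```python
import os
import tempfile

# mkstemp() chooses a unique name and creates the file in the same call,
# returning an already-open descriptor. No one else can claim the name
# between "choose" and "create".
fd, path = tempfile.mkstemp(prefix="report-", suffix=".tmp")
try:
    with os.fdopen(fd, "w") as tmp:
        tmp.write("intermediate results\n")
    with open(path) as f:
        content = f.read()
    mode = os.stat(path).st_mode & 0o777   # on POSIX, readable/writable by owner only
finally:
    os.remove(path)                        # mkstemp() leaves cleanup to the caller

print(content.strip(), oct(mode))
```

Python’s `tempfile.mktemp()` only returns a name and is documented as deprecated for exactly this reason.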

Techniques in Race Condition Exploitation

Race conditions are tricky because they often pop up when multiple parts of a program try to access the same data at the same time. Exploiting them means finding ways to mess with the timing of these operations to get an outcome the programmer didn’t intend. It’s like trying to grab a cookie from a jar when someone else is also reaching for it – whoever gets there just right can snatch it.

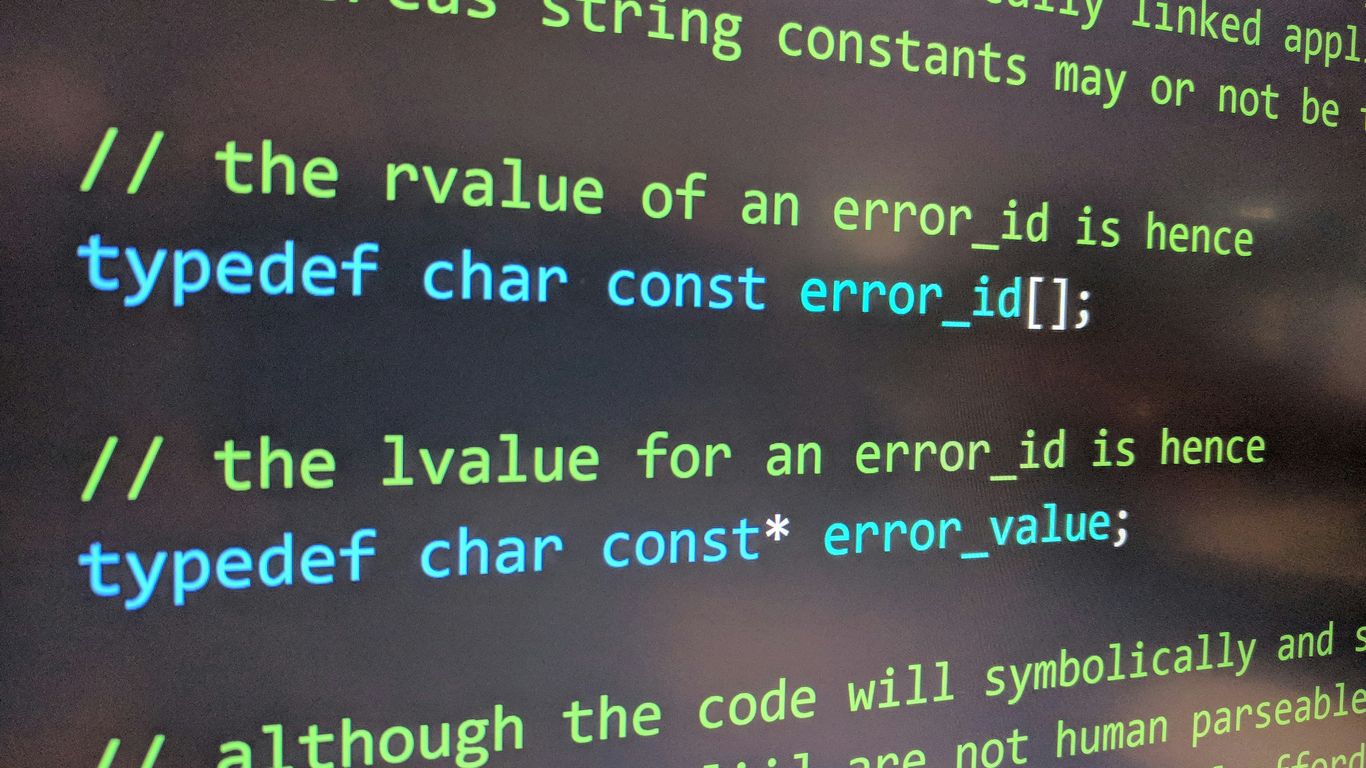

Time-of-Check to Time-of-Use (TOCTOU) Exploits

This is a classic. A TOCTOU exploit happens when a program checks something (like if a file exists or if a user has permission) and then, before it actually uses that information, the state of the system changes. An attacker can slip in during that tiny window between the check and the use to make the program do something it shouldn’t. For example, imagine a program checks if a user can write to a file. If an attacker can quickly replace that file with a symbolic link to a sensitive system file after the check but before the write operation, they might end up overwriting critical data.

- The core idea is a gap between verification and action.

- This gap can be exploited by manipulating the shared resource.

- Common targets include file system operations and permission checks.

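The window of opportunity in TOCTOU attacks is usually very small, often measured in microseconds. Successful exploitation relies on precise timing and an understanding of the system’s internal operations, which is why attackers typically repeat the attempt many times or slow the target down to widen the window.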
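One way to close the gap between check and use is to open the file first and then inspect the object that was actually opened, instead of inspecting the path. The sketch below assumes a POSIX system; `open_regular_file` is a hypothetical helper, and `O_NOFOLLOW` only guards the final path component, not symlinks in parent directories.

```python
import os
import stat
import tempfile


def open_regular_file(path):
    """Open `path` for reading, refusing symlinks and non-regular files."""
    # O_NOFOLLOW makes the open fail if the last path component is a symlink.
    flags = os.O_RDONLY | getattr(os, "O_NOFOLLOW", 0)
    fd = os.open(path, flags)
    try:
        info = os.fstat(fd)              # describes the opened object, not the path
        if not stat.S_ISREG(info.st_mode):
            raise PermissionError(f"{path} is not a regular file")
    except Exception:
        os.close(fd)
        raise
    return os.fdopen(fd, "r")


workdir = tempfile.mkdtemp()
real = os.path.join(workdir, "data.txt")
link = os.path.join(workdir, "link.txt")
with open(real, "w") as f:
    f.write("hello\n")
os.symlink(real, link)

with open_regular_file(real) as f:
    real_content = f.read()

try:
    open_regular_file(link)
    link_rejected = False
except OSError:
    link_rejected = True

print(repr(real_content), link_rejected)
```

Because every later check runs against the file descriptor, swapping the path after the open no longer changes what the program reads.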

Atomic Operation Manipulation

Some operations are supposed to be atomic, meaning they happen all at once, without interruption. If you can find a way to break that atomicity or trick the system into thinking an operation is atomic when it’s not, you can create a race condition. This might involve interrupting a multi-step process that should be atomic or finding a flaw in how the system guarantees atomicity. For instance, if a database transaction that’s supposed to be atomic is implemented poorly, an attacker might be able to observe intermediate states or cause partial updates that lead to inconsistencies.
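For the database case, one way to keep a check and an update atomic is to put the check inside the update statement itself. The sketch below uses Python’s built-in `sqlite3` module with an in-memory database; the `accounts` table and the `withdraw` helper are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance INTEGER NOT NULL)")
conn.execute("INSERT INTO accounts (id, balance) VALUES (1, 100)")
conn.commit()


def withdraw(conn, account_id, amount):
    # The balance check lives in the WHERE clause, so the database evaluates
    # the check and applies the debit as a single statement.
    with conn:  # commits on success, rolls back if an exception is raised
        cur = conn.execute(
            "UPDATE accounts SET balance = balance - ? "
            "WHERE id = ? AND balance >= ?",
            (amount, account_id, amount),
        )
    return cur.rowcount == 1             # zero rows updated means insufficient funds


first = withdraw(conn, 1, 80)
second = withdraw(conn, 1, 80)
balance = conn.execute("SELECT balance FROM accounts WHERE id = 1").fetchone()[0]
print(first, second, balance)            # True False 20
```

The vulnerable alternative is a `SELECT` of the balance followed by a separate `UPDATE`, which leaves a gap between the two statements unless the transaction isolation level or explicit row locking closes it.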

Exploiting Shared Resource Access

This is the bread and butter of race condition exploitation. Whenever multiple threads or processes share access to memory, files, network sockets, or any other resource, there’s potential for a race. Attackers look for scenarios where the order of operations matters. This could be:

- Data Corruption: Two processes try to update the same variable, and the final value depends on which one finishes last.

- Information Leakage: One process reads data while another is modifying it, leading to inconsistent or sensitive information being exposed.

- Denial of Service: Repeatedly triggering race conditions can exhaust system resources or cause crashes.

Understanding how these shared resources are managed is key. For example, in a multi-threaded web server, if multiple threads handle incoming requests and share a cache, a race condition could allow an attacker to manipulate cache entries to serve incorrect data or even execute malicious code. This is why proper synchronization, like using mutexes or semaphores, is so important for preventing race conditions.

| Scenario | Vulnerability Type | Potential Impact |
|---|---|---|
| File access control | TOCTOU | Unauthorized file modification or deletion |
| Shared memory updates | Concurrency | Data corruption, incorrect program state |
| Database transactions | Atomicity | Inconsistent data, financial fraud |
| Network connection handling | Resource pooling | Denial of Service, information disclosure |

Impact of Race Condition Exploitation

When race conditions aren’t properly handled, the consequences can range from annoying glitches to serious security breaches. It’s not just about a program crashing; it’s about what an attacker can do with that instability.

Unauthorized Data Access and Modification

One of the most direct impacts is the ability for an attacker to access or change data they shouldn’t. Imagine a banking application where a race condition allows a user to initiate two withdrawal requests almost simultaneously. If each request checks the balance before either debit has been recorded, the system might allow both withdrawals, even if the initial balance wasn’t sufficient for both. This isn’t just a bug; it’s a way to steal money. Similarly, sensitive user information could be read or altered if access controls are bypassed due to timing issues.

Privilege Escalation Through Race Conditions

This is where things get really serious. Attackers can use race conditions to gain higher levels of access than they’re supposed to have. For example, a process might check if a user has permission to perform an action, and then, before the action is completed, a race condition allows the attacker to change the user’s identity or permissions. The original check passes, but the action is then performed with elevated privileges. This can lead to full system compromise.

- Exploiting file access permissions: An attacker might race to create or modify a file just after a privileged process checks its existence but before it performs an operation on it.

- Bypassing authentication checks: If authentication is handled with a race condition, an attacker might trick the system into thinking they are logged in with higher privileges.

- Manipulating system configurations: Gaining temporary elevated access can allow an attacker to alter critical system settings.

Denial of Service via Race Condition Exploits

While not always about gaining access, race conditions can also be used to make systems unavailable. By triggering a race condition that leads to a crash, an infinite loop, or resource exhaustion, an attacker can effectively shut down a service. This is a denial-of-service (DoS) attack. For critical infrastructure or online services, even a short period of unavailability can have significant financial and reputational costs.

The core problem with race conditions is that they introduce unpredictability into system behavior. This unpredictability is precisely what attackers look for to subvert intended security controls and operational logic.

Real-World Race Condition Exploitation Examples

Looking at how race conditions have played out in the wild can really drive home why they’re such a big deal. It’s not just theoretical; these flaws have led to some pretty serious security incidents.

Case Studies of Exploited Concurrency Flaws

We’ve seen race conditions pop up in all sorts of places. One common area is in systems that handle file operations. Imagine a web server trying to delete a temporary file while another process is trying to read from it. If the timing is just right, the delete operation might happen after the read starts but before it finishes, potentially corrupting data or causing a crash. It sounds simple, but these kinds of timing issues can be tricky to spot during development.

Another classic example involves privilege escalation. Sometimes, a program might check if a user has permission to do something, and then later perform the action. If an attacker can change the conditions between the check and the action – maybe by manipulating a file’s permissions or ownership – they could trick the program into performing an action it shouldn’t. This is often referred to as a Time-of-Check to Time-of-Use (TOCTOU) vulnerability, and it’s a direct consequence of race conditions. Exploiting these can lead to attackers gaining higher-level control over systems, which is a major win for them.

Impact on Critical Infrastructure and Services

When race conditions affect systems that underpin critical infrastructure or widely used services, the impact can be widespread. Think about financial systems, power grids, or even large-scale cloud platforms. A race condition in a payment processing system, for instance, could potentially allow for duplicate transactions or incorrect balance updates. In cloud environments, a flaw in how resources are allocated or deallocated could lead to service disruptions or unauthorized access to data belonging to other tenants. The interconnected nature of modern systems means a single race condition can have ripple effects.

Lessons Learned from Past Exploitations

What can we take away from these incidents? Firstly, it highlights the absolute necessity of robust synchronization mechanisms. Relying on developers to manually manage timing is a recipe for disaster. Using tools like mutexes, semaphores, and atomic operations correctly is non-negotiable when dealing with shared resources. Secondly, thorough testing is key. This includes not just functional testing but also specialized concurrency testing and fuzzing to uncover these elusive timing-dependent bugs. Finally, secure coding practices need to be ingrained in development culture. Developers need to be aware of potential race conditions and how to avoid them from the outset. Understanding common attack vectors, like those used in web application attacks, can also inform defensive strategies.

Mitigation Strategies for Race Conditions

Race conditions can be tricky to spot and even trickier to fix. They pop up when multiple threads or processes try to access shared data at the same time, and the final outcome depends on the exact order of operations, which isn’t always predictable. This can lead to some really weird bugs that are hard to reproduce and even harder to squash. Thankfully, there are established ways to keep these issues at bay.

Implementing Proper Synchronization Mechanisms

This is probably the most common way to deal with race conditions. The idea is to control access to shared resources so that only one thread can modify them at any given moment. Think of it like a single-person bathroom – only one person can use it at a time, and everyone else has to wait their turn. This prevents the chaotic scramble that leads to race conditions.

- Mutexes (Mutual Exclusion Locks): These are like a token. A thread that wants to access a shared resource must first acquire the mutex. If the mutex is already held by another thread, the requesting thread has to wait. Once it’s done with the resource, it releases the mutex, allowing another waiting thread to proceed. This is a very direct way to enforce exclusive access.

- Semaphores: Semaphores are a bit more flexible than mutexes. They manage access to a pool of resources. A semaphore has a counter. Threads can acquire a permit from the semaphore (decrementing the counter) to access a resource. If the counter is zero, threads have to wait. When a thread is done, it releases the permit (incrementing the counter). This is useful when you have a limited number of identical resources, like database connections.

- Monitors: These are higher-level constructs that combine data with the synchronization methods that operate on that data. They often bundle mutexes and condition variables, making it easier to manage complex synchronization scenarios.
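To make the semaphore description concrete, the sketch below limits a pretend connection pool to two concurrent users. The pool size, the `use_connection` function, and the `stats` bookkeeping are illustrative assumptions; a mutex protects the counters, and the semaphore caps how many threads are inside at once.

```python
import threading
import time

MAX_CONNECTIONS = 2
pool_slots = threading.BoundedSemaphore(MAX_CONNECTIONS)
stats_lock = threading.Lock()            # mutex guarding the shared counters
stats = {"active": 0, "peak": 0}


def use_connection():
    with pool_slots:                     # blocks while all permits are taken
        with stats_lock:
            stats["active"] += 1
            stats["peak"] = max(stats["peak"], stats["active"])
        time.sleep(0.01)                 # simulated work on the connection
        with stats_lock:
            stats["active"] -= 1


threads = [threading.Thread(target=use_connection) for _ in range(6)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(stats["peak"] <= MAX_CONNECTIONS)  # True
```

Six threads compete, but the recorded peak never exceeds the number of permits.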

Ensuring Atomic Operations

Sometimes, you don’t need to lock an entire resource; you just need to perform a very small, specific operation without interruption. That’s where atomic operations come in. An atomic operation is one that completes entirely or not at all, from the perspective of other threads. It’s like a single, indivisible step.

- Atomic Variables: Many programming languages and libraries provide atomic data types (like atomic integers or booleans). Operations on these types are guaranteed to be atomic. For example, incrementing an atomic integer will happen as a single, uninterruptible operation, even in a multi-threaded environment.

- Compare-and-Swap (CAS): This is a common low-level atomic primitive. It checks if a memory location still holds an expected value. If it does, it updates the location with a new value. If it doesn’t (meaning another thread changed it), the operation fails, and the thread can retry. This is a building block for many lock-free data structures.

The goal here is to make sure that critical sequences of operations appear as a single, unbreakable unit to the rest of the system. This avoids the ‘interruption points’ where race conditions typically occur.
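The compare-and-swap retry loop looks roughly like this. Standard Python does not expose a hardware CAS instruction, so the `AtomicInt` class below emulates one with an internal lock; treat it as a model of the semantics, not as a lock-free implementation.

```python
import threading


class AtomicInt:
    """Emulated atomic integer; the lock stands in for the hardware instruction."""

    def __init__(self, value=0):
        self._value = value
        self._lock = threading.Lock()

    def load(self):
        with self._lock:
            return self._value

    def compare_and_swap(self, expected, new):
        with self._lock:
            if self._value != expected:
                return False             # another thread changed the value first
            self._value = new
            return True


def increment(counter):
    while True:
        current = counter.load()
        if counter.compare_and_swap(current, current + 1):
            return                       # our update won
        # Otherwise the value moved under us: reload and try again.


counter = AtomicInt()


def worker():
    for _ in range(1000):
        increment(counter)


threads = [threading.Thread(target=worker) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(counter.load())                    # 4000
```

No increment is lost, because an update only lands when the value is still the one the thread read.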

Secure Coding Practices to Prevent Race Conditions

Beyond specific synchronization tools, adopting good coding habits is key. It’s about designing your code from the ground up to minimize the chances of race conditions appearing.

- Minimize Shared Mutable State: The less data that is shared and can be changed by multiple threads, the fewer opportunities there are for race conditions. If possible, design your application so that data is either read-only or passed between threads without direct shared modification.

- Thread-Local Storage: Use thread-local storage when data is specific to a thread. This way, each thread gets its own private copy, eliminating the need for synchronization for that data.

- Immutability: Favor immutable data structures. Once created, they cannot be changed. This naturally eliminates race conditions because there’s no state to modify concurrently. You can safely share immutable objects across threads without any locking.

- Careful Design of Concurrency: Think about how threads will interact before you start coding. Consider using higher-level concurrency abstractions provided by your language or framework, which often handle the underlying synchronization details for you. For example, using message queues or actor models can simplify concurrent programming and reduce the likelihood of shared state issues. Understanding memory injection attacks can also highlight the importance of secure memory handling in concurrent systems.

- Code Reviews and Testing: Have other developers review your concurrent code. Use specialized tools designed to detect race conditions during testing. Static analysis tools can sometimes flag potential issues, while dynamic analysis and fuzzing can uncover bugs that only appear under specific timing conditions. This proactive approach is vital for catching subtle bugs before they make it into production. Exploiting a system’s root of trust often involves complex interactions, and similar principles of careful state management apply.
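As one illustration of the message-queue approach from the list above, the sketch below gives a single thread ownership of the state and lets every other thread send it messages. The `ledger_owner` function and the message format (plain integers, `None` as a stop signal) are assumptions made for the example.

```python
import queue
import threading


def ledger_owner(inbox, result):
    balance = 0                          # private to this thread, never shared
    while True:
        message = inbox.get()
        if message is None:              # sentinel: no more work
            break
        balance += message
    result.append(balance)


inbox = queue.Queue()                    # thread-safe hand-off between threads
result = []
owner = threading.Thread(target=ledger_owner, args=(inbox, result))
owner.start()


def producer():
    for _ in range(1000):
        inbox.put(1)                     # send a message instead of touching state


producers = [threading.Thread(target=producer) for _ in range(4)]
for t in producers:
    t.start()
for t in producers:
    t.join()

inbox.put(None)
owner.join()
print(result)                            # [4000]
```

Only the owner thread ever modifies `balance`, so no explicit lock around it is needed.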

Tools and Technologies for Race Condition Analysis

Finding race conditions can feel like searching for a needle in a haystack, especially in complex systems. Luckily, there are tools and techniques that can help make this process a bit more manageable. These aren’t magic bullets, but they definitely point you in the right direction.

Static Analysis for Concurrency Bugs

Static analysis tools look at your code without actually running it. They scan for patterns that are known to cause concurrency issues, like improper locking or shared data access. Think of it like a spell checker for your code, but instead of grammar, it’s looking for potential race conditions. These tools can catch a lot of common mistakes early in the development cycle, which is always a good thing. Some tools can even identify potential TOCTOU vulnerabilities before they become a problem.

Dynamic Analysis and Fuzzing Techniques

Dynamic analysis, on the other hand, involves running your code and observing its behavior. Fuzzing is a type of dynamic analysis where you throw a ton of random or semi-random data at your application to see if it breaks. When it comes to race conditions, fuzzing can sometimes trigger unexpected interleavings of operations that might lead to a bug. It’s a bit like poking a complex machine with a stick to see if anything rattles loose. This approach is particularly useful for finding bugs that are hard to predict or reproduce.

Here’s a quick look at how these methods can help:

- Static Analysis: Identifies potential issues by examining code structure and patterns.

- Dynamic Analysis: Detects bugs by observing program execution under various conditions.

- Fuzzing: Generates unexpected inputs to uncover hidden flaws and edge cases.

Debugging Tools for Race Condition Detection

When you suspect a race condition, good old-fashioned debugging tools become your best friend. Modern debuggers often have features specifically designed to help with multithreaded applications. You can set breakpoints, step through code execution thread by thread, and inspect the state of shared resources. Some advanced debuggers can even help visualize thread interactions. It takes patience, but carefully stepping through the code when a race condition is suspected can often reveal the exact sequence of events that caused the problem. Keep in mind that pausing at a breakpoint changes thread timing, so a race may not show up under the debugger. Runtime detectors fill that gap: ThreadSanitizer (TSan), for instance, instruments a program and reports data races as it runs.

Finding race conditions often requires a combination of automated tools and manual investigation. Relying on just one method might mean missing critical bugs. It’s about using the right tool for the right job and understanding the limitations of each.

Defensive Programming Against Race Conditions

So, you’ve got this code that’s supposed to run smoothly, but sometimes, things get messy because multiple parts are trying to do their thing at the exact same time. That’s where defensive programming comes in. It’s all about writing code that anticipates these kinds of timing issues and prevents them from causing trouble. Think of it like building a bridge – you don’t just throw beams together; you plan for stress, load, and potential shifts. We need to do the same with our software.

Utilizing Mutexes and Semaphores Effectively

When you have shared resources, like a piece of data or a file, that multiple threads or processes might want to access, you need a way to control who gets in and when. That’s where synchronization primitives like mutexes and semaphores shine. A mutex, short for mutual exclusion, is like a single key to a room. Only one thread can hold the key (lock the mutex) at a time. Anyone else wanting to enter has to wait until the key is returned (the mutex is unlocked). Semaphores are a bit more flexible; they’re like a counter for available resources. You can have multiple threads access a resource up to a certain limit defined by the semaphore’s count.

- Mutexes: Best for ensuring exclusive access to a single resource. If only one thread should ever be writing to a variable, a mutex is your go-to.

- Semaphores: Useful when you have a pool of resources, like database connections or worker threads, and you want to limit the number of concurrent users.

Using these incorrectly can lead to deadlocks (where threads are waiting for each other indefinitely) or livelocks (where threads keep changing state in response to each other without making progress). So, it’s important to understand their behavior and apply them thoughtfully.
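A common way to avoid the deadlock case is to always acquire multiple locks in the same global order. The sketch below transfers money in both directions between two hypothetical accounts; the `Account` class, its `account_id` field, and the `transfer` helper are invented for illustration, and the code assumes the two accounts are distinct.

```python
import threading


class Account:
    def __init__(self, account_id, balance):
        self.account_id = account_id
        self.balance = balance
        self.lock = threading.Lock()


def transfer(src, dst, amount):
    # Lock the account with the lower id first, whatever the direction of the
    # transfer. Two opposing transfers then request the locks in the same
    # order and cannot end up waiting on each other.
    first, second = sorted((src, dst), key=lambda account: account.account_id)
    with first.lock, second.lock:
        if src.balance < amount:
            return False
        src.balance -= amount
        dst.balance += amount
        return True


a, b = Account(1, 1000), Account(2, 1000)


def move(src, dst):
    for _ in range(500):
        transfer(src, dst, 1)


threads = [
    threading.Thread(target=move, args=(a, b)),
    threading.Thread(target=move, args=(b, a)),
]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(a.balance, b.balance)              # 1000 1000
```

If each transfer instead locked `src` first and `dst` second, the two threads could each hold one lock and wait forever for the other.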

Minimizing Shared Mutable State

One of the biggest culprits behind race conditions is shared mutable state. This means data that can be changed (mutable) and is accessed by more than one thread or process (shared). The more places your data can be modified, the higher the chance of a race condition popping up. A good defensive strategy is to simply reduce the amount of data that gets shared and changed. Can you make data read-only after it’s initialized? Can you pass data around instead of having everyone access a central copy? Thinking about immutability and localizing state can significantly simplify your concurrency model.

Here’s a quick breakdown:

- Immutable Data: Once created, it cannot be changed. This eliminates a whole class of race conditions because no one can alter it unexpectedly.

- Local State: Keep data within the scope of a single thread or function whenever possible. If a thread needs data, pass it as an argument rather than having it pull from a global variable.

- Copy-on-Write: For complex data structures, this technique creates a copy only when a modification is attempted, preserving the original for other readers.

The goal here isn’t to eliminate all shared state, which is often impossible, but to be deliberate about where it exists and how it’s accessed. Treat shared mutable state like a hazardous material – handle with extreme care and only when absolutely necessary.
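Two of these ideas map directly onto the Python standard library: frozen dataclasses for immutable data and `threading.local()` for per-thread state. The `Settings` fields, the `handle` function, and the user names below are placeholders.

```python
import threading
from dataclasses import FrozenInstanceError, dataclass, replace


@dataclass(frozen=True)
class Settings:
    rate_limit: int
    allowed_hosts: tuple


current = Settings(rate_limit=100, allowed_hosts=("example.org",))
updated = replace(current, rate_limit=50)    # builds a new object; `current` is untouched

try:
    current.rate_limit = 1                   # in-place mutation is rejected
    mutated = True
except FrozenInstanceError:
    mutated = False

# Thread-local state: each thread sees only its own `user` attribute.
context = threading.local()
both_written = threading.Barrier(2, timeout=5)
seen = {}


def handle(user):
    context.user = user
    both_written.wait()                      # both threads have written before either reads
    seen[user] = context.user


threads = [threading.Thread(target=handle, args=(name,)) for name in ("alice", "bob")]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(current.rate_limit, updated.rate_limit, mutated)   # 100 50 False
print(seen == {"alice": "alice", "bob": "bob"})          # True
```

Readers holding the old `Settings` object keep a consistent view, and neither thread can overwrite the other’s `context.user`.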

Designing for Thread Safety

Thread safety isn’t just about adding locks; it’s a design philosophy. When you’re architecting your application, think about how different parts will interact concurrently from the start. This means considering:

- Atomicity: Can an operation be guaranteed to complete without interruption? If not, can you make it atomic using locks or specialized instructions?

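- Reentrancy: Can a function be safely entered again before an earlier call to it has finished, for example through recursion, a callback, or a signal handler? If it relies on shared state or a non-reentrant lock, the second entry can corrupt data or deadlock.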

- Thread Locality: Can you design components so that they primarily operate on data local to their own thread, minimizing the need for inter-thread communication and synchronization?

It’s about building systems where concurrency doesn’t introduce hidden bugs. This often involves careful planning, code reviews focused on concurrency, and thorough testing under load.
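Reentrancy has a lock-level counterpart that comes up often in practice: a method that holds a lock calls another method that takes the same lock. The sketch below uses Python’s `threading.RLock`, which the owning thread may acquire again; the `Inventory` class and the item names are hypothetical.

```python
import threading


class Inventory:
    def __init__(self):
        self._lock = threading.RLock()       # re-acquirable by the thread that holds it
        self._items = {}

    def add(self, name, quantity):
        with self._lock:
            self._items[name] = self._items.get(name, 0) + quantity

    def add_many(self, items):
        with self._lock:                     # hold the lock for the whole batch
            for name, quantity in items:
                self.add(name, quantity)     # re-enters the same lock safely

    def snapshot(self):
        with self._lock:
            return dict(self._items)


inventory = Inventory()
batch = [("bolt", 1), ("nut", 2)] * 100
threads = [threading.Thread(target=inventory.add_many, args=(batch,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

# For contrast: a plain Lock cannot be taken twice by the same thread.
plain = threading.Lock()
plain.acquire()
reacquired = plain.acquire(blocking=False)   # a blocking acquire here would hang
plain.release()

print(inventory.snapshot(), reacquired)      # {'bolt': 400, 'nut': 800} False
```

With a plain `Lock`, `add_many` would deadlock on its first call to `add`.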

Advanced Race Condition Exploitation Scenarios

While basic race conditions can be tricky, some scenarios push the boundaries of complexity, especially when dealing with distributed systems, kernel operations, and modern cloud architectures. These advanced situations often require a deeper understanding of system internals and concurrency models.

Exploiting Race Conditions in Distributed Systems

Distributed systems, by their very nature, introduce significant challenges for maintaining consistency. Multiple nodes, network latency, and asynchronous communication create fertile ground for race conditions that are harder to detect and exploit than in single-machine applications. Imagine a scenario where a distributed database needs to update a record. If two nodes try to update the same record simultaneously, and the system doesn’t have robust locking mechanisms in place, one update might overwrite the other, or worse, lead to data corruption. This can happen because the network delay means a node might not know about the other’s operation until it’s too late. Exploiting this often involves carefully timing requests to specific nodes to trigger these conflicting operations. The goal is usually to cause data inconsistency or gain unauthorized access by manipulating the state across different parts of the system. Understanding the specific consensus algorithms and communication protocols used is key here.
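Many distributed stores deal with the lost-update problem through conditional writes: a write succeeds only if the record still has the version the writer read. The sketch below is an in-memory stand-in, not any particular database’s API; `VersionedStore`, `VersionConflict`, and the `profile` key are invented for illustration.

```python
import threading


class VersionConflict(Exception):
    pass


class VersionedStore:
    """In-memory stand-in for a store that supports conditional writes."""

    def __init__(self):
        self._data = {}                      # key -> (version, value)
        self._lock = threading.Lock()

    def read(self, key):
        with self._lock:
            return self._data.get(key, (0, None))

    def write(self, key, value, expected_version):
        with self._lock:
            version, _ = self._data.get(key, (0, None))
            if version != expected_version:
                raise VersionConflict(f"{key}: expected v{expected_version}, found v{version}")
            self._data[key] = (version + 1, value)
            return version + 1


store = VersionedStore()
store.write("profile", "initial", expected_version=0)

# Two nodes read the same version before either writes.
version_a, _ = store.read("profile")
version_b, _ = store.read("profile")

store.write("profile", "update from node A", expected_version=version_a)

try:
    store.write("profile", "update from node B", expected_version=version_b)
    conflict_detected = False
except VersionConflict:
    conflict_detected = True                 # node B must re-read and retry

final_version, final_value = store.read("profile")
print(conflict_detected, final_version, final_value)   # True 2 update from node A
```

Instead of node B silently overwriting node A, the stale write is rejected and B can re-read the record and decide what to do.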

Race Conditions in Kernel-Level Operations

Operating system kernels are the heart of a computer system, managing hardware and providing services to applications. Exploiting race conditions at this level can have devastating consequences, often leading to privilege escalation or system crashes. These vulnerabilities might occur in how the kernel handles system calls, manages memory, or interacts with device drivers. For instance, a race condition in a system call that checks file permissions and then opens a file could allow an attacker to swap the file between the check and the open operation, gaining access to a file they shouldn’t. This is a classic Time-of-Check to Time-of-Use (TOCTOU) vulnerability, but at the kernel level, the impact is far greater. Successfully exploiting these requires deep knowledge of kernel internals and often involves writing custom exploits that can interact with the kernel at a very low level. These exploits can be used to gain administrator or root access, effectively giving the attacker full control over the system. This is a major concern for system security, as a compromised kernel can undermine all other security measures.

Race Conditions in Cloud-Native Architectures

Cloud-native environments, with their reliance on microservices, containers, and dynamic orchestration, present a new frontier for race condition exploitation. The ephemeral nature of containers and the complex interactions between numerous services can create subtle timing windows. For example, consider multiple microservices that read from a shared configuration store. If an update to that configuration isn’t atomic, one service might read a mix of old and new settings while another is in the middle of writing them. This could lead to inconsistent behavior or security bypasses. Exploiting these windows often involves understanding the service mesh, the API gateways, and the underlying orchestration platform, such as Kubernetes. Attackers might try to trigger race conditions during service deployments, during scaling events, or when services communicate through shared queues or databases. The complexity of these environments means that a vulnerability in one small component can cascade and affect multiple services, leading to widespread disruption or data breaches.
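
The shared-configuration example can be sketched in a single process. The `ConfigStore` class and the `auth_url` / `auth_key` keys below are hypothetical, and a real configuration service would sit behind a network API, but the idea carries over: writers build a complete new snapshot and publish it in one step, so a reader sees either the old configuration or the new one, never a half-written mix. The sketch assumes CPython, where rebinding an attribute to a new object is a single reference swap.

```python
import threading
from types import MappingProxyType
from typing import Mapping


class ConfigStore:
    """In-process stand-in for a shared configuration store (hypothetical)."""

    def __init__(self, initial: dict) -> None:
        self._write_lock = threading.Lock()  # serializes writers only
        self._snapshot: Mapping[str, str] = MappingProxyType(dict(initial))

    def get(self) -> Mapping[str, str]:
        # Readers grab one reference to an immutable snapshot.
        return self._snapshot

    def update(self, changes: dict) -> None:
        with self._write_lock:
            merged = dict(self._snapshot)
            merged.update(changes)
            # Publish the finished snapshot in a single assignment.
            self._snapshot = MappingProxyType(merged)


def count_torn_reads(rounds: int = 2000) -> int:
    """Count reads in which the two related keys disagree."""
    store = ConfigStore({"auth_url": "v0", "auth_key": "v0"})
    done = threading.Event()

    def writer() -> None:
        for i in range(1, rounds + 1):
            tag = f"v{i}"
            store.update({"auth_url": tag, "auth_key": tag})
        done.set()

    torn = 0
    thread = threading.Thread(target=writer)
    thread.start()
    while not done.is_set():
        snap = store.get()
        if snap["auth_url"] != snap["auth_key"]:
            torn += 1
    thread.join()
    return torn
```

Had `update` mutated a shared dictionary key by key, a reader could observe a new `auth_url` paired with an old `auth_key`. Publishing whole snapshots removes that window inside one process; across services, the equivalent is usually a versioned or transactional write in the store itself.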

The Evolving Landscape of Race Condition Exploitation

The way race conditions are exploited isn’t static; it’s always changing. As systems get more complex, new opportunities for these kinds of bugs pop up. We’re seeing a shift towards more sophisticated attacks that target the intricate ways modern applications handle concurrency.

Emerging Trends in Concurrency Vulnerabilities

Attackers are getting smarter about finding and using race conditions. Instead of just looking for the obvious flaws, they’re digging into the subtle timing issues that can arise in distributed systems and microservices. Think about it: with so many moving parts, it’s easier for unexpected interactions to happen. Exploiting these complex interactions is becoming a key focus. We’re also seeing more interest in cloud-native environments, where the shared infrastructure and dynamic scaling can introduce unique concurrency challenges. It’s not just about finding a bug anymore; it’s about understanding the entire system’s behavior under stress.

The Role of AI in Race Condition Discovery

Artificial intelligence is starting to play a bigger role here, both for defenders and attackers. AI tools can sift through massive amounts of code and runtime data, looking for patterns that might indicate a race condition. This can speed up the discovery process significantly. On the flip side, attackers can use AI to automate the testing of complex systems, finding vulnerabilities that might have been missed by manual analysis. It’s a bit of an arms race, with AI helping to find bugs faster than ever before. This is changing how we approach security testing and vulnerability management.

Future Challenges in Race Condition Defense

Looking ahead, the biggest challenge will be keeping up with the pace of development. As systems become more distributed and rely on more third-party components, the potential for race conditions grows. We’ll need better tools for analyzing these complex systems, and developers will need to adopt more robust security practices from the start. The focus will likely shift towards building systems that are inherently more resilient to concurrency issues, rather than just patching them after they’re found. It’s a continuous effort to stay ahead of the curve.

Wrapping Up: Staying Ahead of Race Conditions

So, we’ve looked at how race conditions can pop up in software, sometimes in ways you wouldn’t expect. It’s not always obvious, but these little timing issues can lead to some pretty big security problems, like letting someone get more access than they should or making unauthorized changes. The key takeaway here is that building secure software means paying attention to the details, especially how different parts of the system talk to each other and when. It’s about being proactive, testing thoroughly, and keeping systems updated. Because honestly, attackers are always looking for these kinds of gaps, and we need to be just as diligent in closing them.

Frequently Asked Questions

What is a race condition in computing?

A race condition happens when two or more parts of a program access the same data at the same time and at least one of them changes it. The result then depends on which one gets there first, so the program can act in ways the developer didn’t expect, causing bugs or security problems.

How can attackers exploit race conditions?

Attackers can take advantage of race conditions by timing their actions to get around security checks. For example, they might change something between when a program checks it and when it uses it, letting them do things they shouldn’t be able to.

What are some common situations where race conditions appear?

Race conditions often show up in programs that use threads, networked systems, or file operations. Any time two actions can happen at the same time and change the same thing, a race condition is possible.

What damage can race condition exploits cause?

Race condition exploits can let attackers read or change data they shouldn’t, gain higher-level access, or even crash systems. This can lead to data leaks, loss of control, or services being knocked offline.

How can software developers prevent race conditions?

Developers can prevent race conditions by using locks, mutexes, or semaphores to make sure only one part of a program can change important data at a time. They should also write code that checks and uses data in a single atomic step, rather than in two separate steps with a gap in between.
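
As a minimal sketch of that advice, the hypothetical `Account` class below keeps the balance check and the balance update inside the same lock, so no other thread can slip in between them. The class, the `run_withdrawals` helper, and the numbers are made up for illustration.

```python
import threading
from typing import Tuple


class Account:
    def __init__(self, balance: int) -> None:
        self._balance = balance
        self._lock = threading.Lock()

    def withdraw(self, amount: int) -> bool:
        # The check and the update share one critical section.
        with self._lock:
            if self._balance < amount:
                return False
            self._balance -= amount
            return True

    @property
    def balance(self) -> int:
        with self._lock:
            return self._balance


def run_withdrawals(start: int, amount: int, workers: int) -> Tuple[int, int]:
    """Run concurrent withdrawals; return (successful count, final balance)."""
    account = Account(start)
    results = []
    results_lock = threading.Lock()

    def worker() -> None:
        ok = account.withdraw(amount)
        with results_lock:
            results.append(ok)

    threads = [threading.Thread(target=worker) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sum(results), account.balance
```

With a starting balance of 100 and ten threads each withdrawing 30, exactly three withdrawals succeed and the balance never goes negative, regardless of how the threads are scheduled.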

Are there tools that help find race conditions?

Yes, there are tools that can scan code for possible race conditions. Some tools check the code without running it (static analysis), while others test the program as it runs (dynamic analysis or fuzzing). Debuggers can also help spot these bugs.

What is a Time-of-Check to Time-of-Use (TOCTOU) bug?

A TOCTOU bug happens when a program checks something, such as whether a file exists, and the thing it checked changes before the program actually uses it. Attackers can use the gap between the check and the use to sneak in their own actions.
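
Here is a small Python sketch of the file-exists case, with hypothetical function names. The first version checks and then creates in two steps; the second asks the operating system to create the file only if it does not already exist, so the check and the use become one operation.

```python
import os


def create_if_absent_racy(path: str, data: bytes) -> bool:
    # VULNERABLE shape: another process can create (or symlink) the path
    # after the exists() check and before the open().
    if os.path.exists(path):
        return False
    with open(path, "wb") as f:
        f.write(data)
    return True


def create_if_absent_atomic(path: str, data: bytes) -> bool:
    # O_CREAT | O_EXCL makes "does it exist?" and "create it" a single
    # operation: the call fails if the path already exists.
    try:
        fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o600)
    except FileExistsError:
        return False
    with os.fdopen(fd, "wb") as f:
        f.write(data)
    return True
```

Both functions behave the same when nothing else touches the path. The difference only shows up under contention, which is exactly why this class of bug is easy to miss in ordinary testing.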

Can race conditions happen in cloud or distributed systems?

Yes, race conditions can happen in cloud and distributed systems because many computers or services might try to access the same resources at once. This makes it even more important to use good security and design practices.