Keeping digital systems safe from unwanted visitors is a big deal these days. That’s the job of intrusion detection systems, or IDS, which are like the security guards for your computer networks and individual machines. They’re designed to spot suspicious activity and let you know when something’s not right, helping to stop problems before they get out of hand.

Key Takeaways

- Intrusion detection systems (IDS) are key for spotting unwanted activity on networks and devices.

- Both network-based and host-based IDS monitor different areas for potential threats.

- Signature-based and anomaly-based methods offer different ways to identify malicious behavior.

- Advanced techniques like AI and behavioral analysis are improving how we detect threats.

- Effective alerting and integration with threat intelligence are vital for responding to detected issues.

Understanding Intrusion Detection Systems

Intrusion Detection Systems (IDS) are a key part of any cybersecurity setup. Think of them as the security cameras and alarm systems for your digital world. They’re designed to watch over your networks and systems, looking for anything suspicious or out of the ordinary that might signal a security problem. When they spot something that looks like trouble, they raise an alert, letting you know there’s a potential issue that needs checking out.

Intrusion Detection Systems Overview

At its core, an IDS is a monitoring tool. It detects and reports; it doesn’t block an attack in progress on its own (that’s the job of an intrusion prevention system, or IPS), but it’s vital for knowing when something bad is happening. These systems can be placed in various spots within your IT infrastructure. Some focus on watching network traffic, while others keep an eye on individual computers or servers. The main goal is to provide visibility into potential threats that might have slipped past your initial defenses.

How Intrusion Detection Systems Work

IDS tools work by analyzing activity. They look for patterns that match known malicious behaviors or deviations from what’s considered normal for your environment. This analysis can happen in a couple of main ways: signature-based detection, which looks for known bad patterns, and anomaly-based detection, which flags unusual activity.

- Signature-Based Detection: This is like having a database of known viruses or attack signatures. If the system sees traffic or activity that matches a known signature, it triggers an alert. It’s great for catching common, well-known threats.

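- Anomaly-Based Detection: This method first learns what normal activity looks like in your environment, then flags anything that deviates significantly from that baseline. It can catch threats that have no known signature, though it needs tuning to keep false positives down.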

Network-Based Intrusion Detection

When we talk about network security, a big part of it is watching what’s happening on the network itself. This is where network-based intrusion detection systems (NIDS) come into play. Think of them as the security guards patrolling the hallways of your digital building, keeping an eye out for anyone who doesn’t belong or is acting suspiciously.

Network Traffic Monitoring

At its core, network traffic monitoring is all about observing the data packets that travel across your network. It’s like listening in on conversations to make sure no one is planning something bad. These systems look at things like the source and destination of the traffic, the ports being used, and the volume of data. By analyzing these patterns, they can spot unusual activity that might indicate an attack. For example, a sudden surge in traffic to a specific server from an unknown source could be a red flag.
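As a rough illustration of the kind of check described above, the sketch below sums bytes per flow and flags heavy flows from sources that are not on a known list. The `Packet` record, the `known_sources` set, and the `max_bytes` threshold are assumptions made up for this example; they do not come from any particular NIDS product.

```python
from collections import defaultdict
from typing import NamedTuple


class Packet(NamedTuple):
    """Simplified packet record (hypothetical; real captures carry far more)."""
    src: str       # source IP address
    dst: str       # destination IP address
    dst_port: int  # destination port
    size: int      # payload size in bytes


def bytes_per_flow(packets):
    """Sum the bytes seen for each (src, dst, dst_port) flow."""
    totals = defaultdict(int)
    for p in packets:
        totals[(p.src, p.dst, p.dst_port)] += p.size
    return dict(totals)


def flag_heavy_flows(packets, known_sources, max_bytes):
    """Return flows from unknown sources whose total volume exceeds max_bytes."""
    return sorted(
        flow
        for flow, total in bytes_per_flow(packets).items()
        if flow[0] not in known_sources and total > max_bytes
    )
```

In practice the threshold would come from historical traffic rather than a fixed number, which is where anomaly-based detection comes in.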

Protocol Analysis

Beyond just watching the flow of data, NIDS also dig deeper into the protocols being used. Protocols are the sets of rules that govern how devices communicate. Attackers can sometimes exploit weaknesses in these protocols or use them in ways they weren’t intended. Protocol analysis involves checking if the traffic adheres to the expected standards for protocols like TCP/IP, HTTP, or DNS. If a packet looks like it’s trying to trick a system by bending the rules of a protocol, the NIDS can flag it.
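One simple form of protocol analysis is checking whether a TCP segment’s flag combination makes sense. The sketch below is a minimal illustration that assumes the flags byte has already been parsed out of the packet. The function name `tcp_flag_issues` is hypothetical, and real NIDS engines check far more than this.

```python
# Standard TCP header flag bits.
FIN, SYN, RST, PSH, ACK, URG = 0x01, 0x02, 0x04, 0x08, 0x10, 0x20


def tcp_flag_issues(flags):
    """Return a list of reasons why a TCP flag combination looks abnormal."""
    issues = []
    if (flags & 0x3F) == 0:
        issues.append("no flags set (null scan pattern)")
    if (flags & SYN) and (flags & FIN):
        issues.append("SYN and FIN set together")
    if (flags & SYN) and (flags & RST):
        issues.append("SYN and RST set together")
    xmas = FIN | PSH | URG
    if (flags & xmas) == xmas:
        issues.append("FIN, PSH and URG all set (Xmas scan pattern)")
    return issues
```

An empty list means the combination passed these particular checks, not that the packet is safe.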

Intrusion Detection Systems in Network Security

So, how do these systems fit into the bigger picture of network security? They act as a critical detection layer. While firewalls are great at blocking known bad traffic at the borders, NIDS are designed to spot threats that might get past those initial defenses or originate from within the network. They can identify things like:

- Malware propagation attempts

- Unauthorized access or scanning

- Denial-of-service (DoS) attacks

- Lateral movement by an attacker who has already gained a foothold

The main goal is to provide visibility into potential threats that might otherwise go unnoticed. When a NIDS detects something suspicious, it generates an alert. This alert then needs to be investigated by security personnel to determine if it’s a real threat or a false alarm. This process is key to responding quickly and minimizing any potential damage.

Host-Based Intrusion Detection

Host-based intrusion detection systems (HIDS) are like the security guards stationed inside your buildings, watching over individual rooms. Unlike network-based systems that monitor traffic flowing between buildings, HIDS focus on what’s happening on specific computers or servers. They keep an eye on system files, logs, and running processes to catch any suspicious activity that might be happening right on the device itself.

Endpoint Activity Monitoring

This is the core of HIDS. It involves constantly watching the activity on endpoints – think laptops, desktops, and servers. What are programs doing? Are new files being created or modified unexpectedly? Is there unusual network communication originating from the machine? By monitoring these things, HIDS can spot malware trying to run, unauthorized software installations, or even an attacker trying to move around within the system.

- Process Monitoring: Watching which programs are running, their parent-child relationships, and their resource usage.

- File System Monitoring: Tracking changes to critical system files and directories. Any unauthorized modification is a red flag.

- Registry Monitoring (Windows): Observing changes to the Windows Registry, which is a common target for malware and configuration tampering.

File Integrity Monitoring

File Integrity Monitoring (FIM) is a specialized part of HIDS that’s all about making sure important files haven’t been tampered with. Imagine you have a critical configuration file for your web server. FIM creates a baseline ‘fingerprint’ of that file (like a checksum or hash) and then regularly checks if the file has changed. If the fingerprint doesn’t match, it means someone or something has altered the file, which could be a sign of a compromise.

FIM is particularly useful for detecting unauthorized modifications to system files, configuration settings, and application executables. It helps ensure that the integrity of your system hasn’t been compromised by malicious actors or accidental changes.
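The baseline-and-compare idea can be sketched in a few lines. This is a minimal example, assuming SHA-256 as the fingerprint and an in-memory dictionary as the baseline store; the function names are illustrative, and a real FIM tool would also protect the baseline itself from tampering.

```python
import hashlib
from pathlib import Path


def file_fingerprint(path):
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_file_baseline(paths):
    """Record the current fingerprint of every monitored file."""
    return {str(p): file_fingerprint(p) for p in paths}


def check_integrity(baseline):
    """Compare files against the baseline; return {path: 'modified' | 'missing'}."""
    findings = {}
    for path, expected in baseline.items():
        if not Path(path).exists():
            findings[path] = "missing"
        elif file_fingerprint(path) != expected:
            findings[path] = "modified"
    return findings
```

An empty result from `check_integrity` means every monitored file still matches its recorded fingerprint.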

System Log Analysis

Every computer generates a ton of logs – records of events that happen. HIDS analyze these logs to find patterns that indicate trouble. This includes things like:

- Authentication Logs: Repeated failed login attempts, logins from unusual locations or times.

- System Event Logs: Errors, warnings, or critical events that might point to a system malfunction or an attack.

- Application Logs: Unusual activity reported by specific software applications.

By correlating events across different log sources, HIDS can piece together a picture of an attack that might not be obvious from looking at just one log file. It’s like putting together puzzle pieces to see the whole image of what’s going on.
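To make the authentication-log case concrete, here is a small sketch that counts failed logins per source address. It assumes a simplified sshd-style message ("Failed password for USER from IP ..."); the regular expression and the default threshold of 5 are assumptions for illustration and would need adapting to your actual log format.

```python
import re
from collections import Counter

# Assumed log format: "... Failed password for [invalid user ]NAME from IP ..."
FAILED_LOGIN = re.compile(r"Failed password for (?:invalid user )?(\S+) from (\S+)")


def failed_logins_by_source(lines):
    """Count failed login attempts per source address."""
    counts = Counter()
    for line in lines:
        match = FAILED_LOGIN.search(line)
        if match:
            counts[match.group(2)] += 1
    return counts


def suspicious_sources(lines, threshold=5):
    """Return sources with at least `threshold` failed attempts."""
    counts = failed_logins_by_source(lines)
    return sorted(ip for ip, n in counts.items() if n >= threshold)
```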

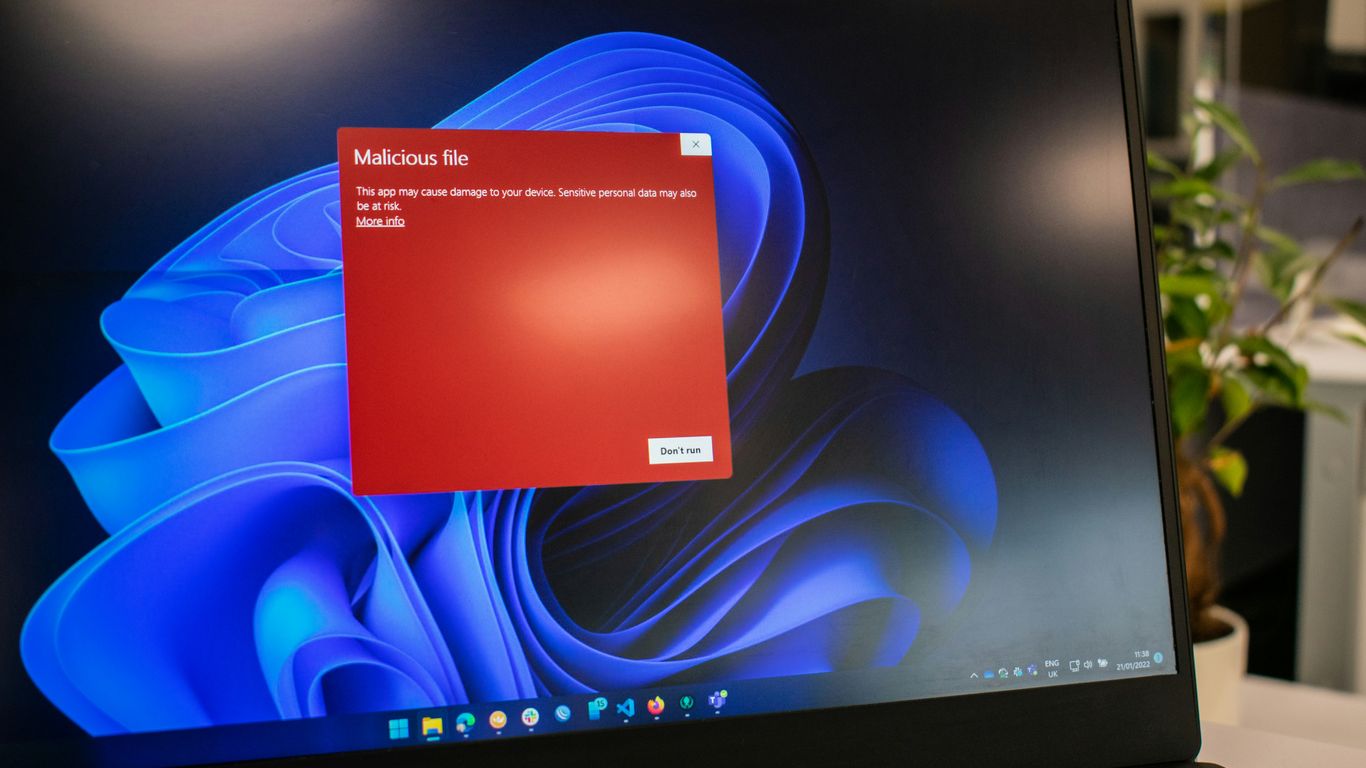

Signature-Based Detection Methods

Signature-based detection is a pretty common way security systems try to spot trouble. Think of it like a virus scanner on your computer. It works by looking for specific patterns, or "signatures," that are known to be associated with malicious software or activities. When the system finds a match, it flags it as a potential threat.

Matching Known Malicious Patterns

This method relies on a database of known threats. Security tools, like Intrusion Detection Systems (IDS) or antivirus software, compare incoming data or system activity against this database. If a piece of code, a network packet, or a command sequence exactly matches a signature in the database, it’s considered malicious. It’s really effective against threats that have already been identified and cataloged.

- Signatures can be based on file hashes, specific byte sequences in files, network traffic patterns, or even registry entries.

- The accuracy depends heavily on the quality and completeness of the signature database.

- This approach is straightforward and generally produces fewer false positives for known threats compared to other methods.
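The matching step can be sketched as a lookup against a signature database. The two databases below are made-up placeholders (the names and patterns are not real malware signatures), and the sketch only covers hash and byte-sequence signatures.

```python
import hashlib

# Hypothetical signature databases, for illustration only.
HASH_SIGNATURES = {
    hashlib.sha256(b"known-bad-sample").hexdigest(): "Example.Hash.A",
}
BYTE_SIGNATURES = {
    b"EVIL_MARKER": "Example.Bytes.B",
}


def match_signatures(data):
    """Return the names of all signatures that match the given bytes."""
    hits = []
    # Whole-content match: the hash of the data is in the database.
    name = HASH_SIGNATURES.get(hashlib.sha256(data).hexdigest())
    if name:
        hits.append(name)
    # Partial match: a known byte sequence appears anywhere in the data.
    for pattern, sig_name in BYTE_SIGNATURES.items():
        if pattern in data:
            hits.append(sig_name)
    return hits
```

Note that `match_signatures(b"known-bad-sample!")` returns nothing: changing a single byte defeats the hash signature, which is exactly why signature quality and coverage matter.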

Signature Updates and Management

Because new threats pop up all the time, keeping the signature database up-to-date is super important. Security vendors regularly release updates with new signatures to cover the latest malware and attack techniques. Managing these updates involves ensuring they are deployed quickly and correctly across all your security devices. If your signatures are old, you’re basically leaving the door open for newer, uncataloged threats.

Here’s a look at the process:

- Threat Research: Security analysts identify new malware or attack patterns.

- Signature Creation: Unique identifiers (signatures) are developed for these threats.

- Distribution: Updates are pushed out to security products.

- Deployment: Systems download and apply the new signatures.

- Verification: Confirming that updates were successful and systems are protected.

Limitations of Signature-Based Detection

While useful, signature-based detection isn’t a silver bullet. Its biggest weakness is its inability to detect novel or previously unseen threats. Attackers are constantly changing their tactics, using polymorphic malware that alters its code with each infection, or employing zero-day exploits that haven’t been seen before. In these cases, a signature-based system won’t have a match and will likely miss the attack entirely. It also struggles with sophisticated attacks that might use legitimate tools in malicious ways or obfuscate their malicious code.

Signature-based detection is like having a list of known criminals. It’s great for catching people on the list, but it won’t help much if a new criminal shows up who isn’t on it yet. The attackers know this, so they often try to create new ways to break in that aren’t in the security system’s playbook.

Anomaly-Based Detection Methods

Anomaly-based detection is a pretty neat way to catch bad stuff happening on your network or systems. Instead of looking for specific, known bad patterns like signature-based methods do, it focuses on what’s normal for your environment. Think of it like this: if your system usually hums along quietly, and suddenly it starts making a weird grinding noise, you know something’s up, even if you’ve never heard that specific noise before. That’s essentially what anomaly detection does – it spots deviations from the established baseline behavior.

Establishing Baseline Behavior

To make anomaly detection work, you first have to teach it what’s normal. This involves collecting a lot of data over a period of time to build a profile of typical activity. This isn’t just about network traffic; it can include user login times, the applications people use, the files they access, and even the commands they run. The goal is to create a detailed picture of everyday operations. This baseline needs to be dynamic, though, because normal can change over time as your organization evolves. It’s a bit like getting to know a new neighborhood – you learn the usual sounds and sights, but you also notice when things are out of the ordinary.

Identifying Deviations from Normality

Once you have that baseline, the system starts watching for anything that doesn’t fit. This could be a user logging in from an unusual location at an odd hour, a server suddenly sending out way more data than it normally does, or a process trying to access files it never touches. The real strength of anomaly detection lies in its ability to flag unknown threats that signature-based systems would miss. It’s not about matching a known bad signature; it’s about recognizing that something is just different and potentially suspicious. This approach is particularly useful for catching zero-day exploits or novel malware. However, it’s not perfect. Sometimes, legitimate changes in behavior can trigger alerts, leading to what we call false positives. That’s where tuning comes in.
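One common and simple way to express "deviates from the baseline" is a z-score: how many standard deviations a new value sits from the historical mean. The sketch below assumes a single numeric metric (for example, megabytes sent per hour) and a default threshold of 3 standard deviations; both are illustrative choices, and production systems typically use richer models.

```python
from statistics import mean, stdev


def traffic_baseline(samples):
    """Summarize historical observations as (mean, standard deviation)."""
    return mean(samples), stdev(samples)


def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value that lies more than `threshold` deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # History never varied, so any change at all is a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold
```

The `threshold` parameter is the sensitivity knob: lowering it catches smaller deviations at the cost of more false positives.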

Tuning Anomaly Detection for Accuracy

Getting anomaly detection just right can be a bit of a balancing act. If it’s too sensitive, you’ll be flooded with alerts for minor, harmless deviations, leading to alert fatigue. If it’s not sensitive enough, you might miss actual threats. So, tuning is key. This involves adjusting the sensitivity of the detection algorithms and refining the baseline to better reflect actual normal operations. It often requires ongoing effort from security analysts who review alerts, identify false positives, and provide feedback to the system. Think of it as training a guard dog: you want it to bark at strangers but not at every falling leaf. Effective tuning helps ensure that the alerts you receive are meaningful and actionable, making your security monitoring more efficient. Other detective security controls complement anomaly detection and are worth reviewing alongside it.

Here’s a quick look at what might be flagged:

- Unusual Login Activity: Access from new geographic locations, at odd times, or with a high rate of failed attempts.

- Network Traffic Spikes: A server suddenly sending or receiving significantly more data than its historical average.

- Abnormal Process Behavior: A system process attempting to access sensitive files or execute commands outside its normal scope.

- Changes in Application Usage: Users suddenly employing applications or features they’ve never used before.

Anomaly detection works by establishing a profile of normal system or network behavior and then flagging any activity that significantly deviates from this established norm. This makes it effective against novel threats but requires careful tuning to minimize false positives.

Behavioral Analysis in Detection

User and Entity Behavior Analytics (UEBA)

User and Entity Behavior Analytics, or UEBA, is all about watching what users and systems are doing and then spotting when things get weird. Instead of just looking for known bad stuff, UEBA tries to figure out what’s normal for a specific user or device. It builds a picture of typical activity over time. When something pops up that doesn’t fit that picture – like a user suddenly accessing files they never touch, or a server suddenly making a ton of outbound connections – it flags it. This is super helpful for catching things like compromised accounts, where an attacker might be using a legitimate login but acting totally out of character. It also helps spot insider threats, where someone with access might be doing something they shouldn’t.

Monitoring Authentication and Access Patterns

When we talk about monitoring authentication and access, we’re really looking at how people and systems gain access, and whether that access looks legitimate. This involves watching login attempts, where they’re coming from, and when they’re happening. Think about it: if someone usually logs in from New York at 9 AM and suddenly logs in from Tokyo at 3 AM, that’s a big red flag. We also look at how often logins fail, since a lot of failed attempts might mean someone is trying to guess a password. Beyond just logging in, we watch what happens after access is granted. Are they accessing resources they normally don’t? Are they trying to get to more sensitive areas? Keeping an eye on these patterns helps us catch unauthorized access early. This matters in cyber espionage cases in particular, where attackers often try to blend in by using stolen credentials.
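The New York and Tokyo example above is often called impossible travel: two logins so far apart that nobody could have covered the distance in the time between them. A minimal sketch, assuming each login has already been geolocated to a latitude and longitude, and using 900 km/h (roughly airliner speed) as an assumed cutoff:

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    lat1, lon1, lat2, lon2 = map(radians, (lat1, lon1, lat2, lon2))
    a = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


def is_impossible_travel(login_a, login_b, max_speed_kmh=900.0):
    """Each login is (latitude, longitude, unix_timestamp_seconds)."""
    (lat1, lon1, t1), (lat2, lon2, t2) = login_a, login_b
    distance = haversine_km(lat1, lon1, lat2, lon2)
    hours = abs(t2 - t1) / 3600
    if hours == 0:
        # Simultaneous logins from two different places.
        return distance > 0
    return distance / hours > max_speed_kmh
```

IP geolocation is imprecise and VPNs distort it, so a hit here is a reason to investigate rather than proof of compromise.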

Detecting Privilege Escalation

Privilege escalation is a fancy term for when a regular user or program manages to get higher-level permissions than they’re supposed to have. Attackers love this because it lets them do more damage, like install malware or steal sensitive data. Behavioral analysis helps here by looking for unusual activity that suggests this is happening. For example, if a standard user account suddenly starts running commands that require administrator rights, that’s a big warning sign. We might see attempts to exploit known vulnerabilities to gain these higher privileges, or perhaps the abuse of legitimate tools that are already built into the system. Detecting these shifts in behavior, even if the initial access was legitimate, is vital for stopping an attack before it gets out of hand. It’s about noticing the change in behavior, not just the initial access.

Here’s a quick look at what we monitor:

- Login Anomalies: Impossible travel, unusual times, high failure rates.

- Access Violations: Attempts to access unauthorized files or systems.

- Command Execution: Running commands typically reserved for administrators.

- Process Behavior: Unexpected processes or modifications to system files.

Detecting privilege escalation is like noticing someone who normally just walks through the front door suddenly trying to pick the lock on the CEO’s office. It’s a significant change in behavior that warrants immediate attention.

Cloud Environment Detection

When your organization moves to the cloud, the way you detect intrusions needs to change too. It’s not just about servers anymore; it’s about virtual machines, containers, serverless functions, and a whole lot of APIs. Think of it like moving from a single house to a sprawling apartment complex – you need to watch every door, window, and shared hallway.

Cloud Workload Monitoring

This is about keeping an eye on what’s actually running in your cloud. We’re talking about virtual machines, containers, and even those tiny serverless functions. The goal is to spot anything unusual, like a workload suddenly trying to access data it shouldn’t, or a container behaving like it’s been infected. It’s like having security cameras inside each apartment unit, not just at the main entrance.

- Spotting rogue processes: Looking for software running that shouldn’t be there.

- Resource abuse: Detecting if a workload is using way more CPU or memory than normal, which could signal an attack.

- Unusual network connections: Monitoring if a workload is trying to talk to suspicious external servers.

Identity and Access Management Monitoring

In the cloud, your identity is often the new perimeter. Who is accessing what, and when? This is super important. We need to watch login attempts, see if someone is trying to jump between accounts, or if an account suddenly has way more permissions than it should. Think of it as monitoring who has keys to which apartments and if they’re using them correctly.

- Suspicious login activity: Flagging logins from weird locations or at odd hours.

- Privilege escalation: Detecting when a user or service account tries to gain higher access levels.

- Abnormal access patterns: Spotting when an account starts accessing files or services it never touched before.

Cloud Configuration Auditing

Misconfigurations are a huge problem in the cloud. It’s like leaving a window unlocked in your apartment building – it’s an easy way for trouble to get in. This means regularly checking that your cloud services are set up securely. Are storage buckets public when they shouldn’t be? Are security groups too open? This is about making sure the building’s security settings are all correct.

Regularly auditing cloud configurations helps prevent common breaches caused by simple mistakes. It’s a proactive step that catches many potential issues before they can be exploited.

Here’s a quick look at what we check:

- Storage bucket permissions: Ensuring sensitive data isn’t exposed publicly.

- Network security group rules: Verifying that only necessary traffic is allowed in and out.

- Identity and Access Management (IAM) policies: Making sure roles and permissions follow the principle of least privilege.
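The three checks above can be sketched as a small audit function. The configuration layout here (the `buckets`, `ingress_rules`, and `iam_policies` keys and their fields) is a hypothetical, provider-neutral structure invented for this example; real cloud providers expose this information through their own APIs and formats. Treating ports 80 and 443 as acceptable public ports is also an assumption.

```python
# Assumption: only web ports are expected to be reachable from anywhere.
ALLOWED_PUBLIC_PORTS = {80, 443}


def audit_cloud_config(config):
    """Return a list of human-readable findings for risky settings."""
    findings = []
    for bucket in config.get("buckets", []):
        if bucket.get("public_read"):
            findings.append(f"bucket '{bucket['name']}' is publicly readable")
    for rule in config.get("ingress_rules", []):
        if rule["cidr"] == "0.0.0.0/0" and rule["port"] not in ALLOWED_PUBLIC_PORTS:
            findings.append(f"port {rule['port']} is open to the whole internet")
    for policy in config.get("iam_policies", []):
        if "*" in policy["actions"] and "*" in policy["resources"]:
            findings.append(
                f"policy '{policy['name']}' grants all actions on all resources"
            )
    return findings
```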

Integration with Threat Intelligence

Leveraging Indicators of Compromise

Threat intelligence is all about using information about potential or ongoing cyber threats to make your defenses better. One of the most direct ways to do this is by using Indicators of Compromise (IoCs). These are basically digital clues, like specific IP addresses, file hashes, or domain names, that have been seen in connection with malicious activity. When your security systems can check their logs and network traffic against a list of known IoCs, they can flag suspicious activity much faster. It’s like having a list of known troublemakers’ fingerprints to compare against at the scene of a potential crime. This helps systems identify threats that might otherwise slip by unnoticed.

Contextualizing Threat Data

Just having a list of IoCs isn’t always enough, though. That’s where contextualizing threat data comes in. It means understanding why an IoC is bad and what kind of attack it’s associated with. For example, knowing that a specific IP address is linked to a known phishing campaign is more useful than just knowing it’s a bad IP. This context helps security teams figure out the potential impact of a detected event and how serious it is. It also helps in figuring out the attacker’s likely goals and methods, which can guide the response. Without context, you might get a lot of alerts that are hard to sort through.
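Putting the last two ideas together, IoC matching with context can be sketched as a dictionary lookup that returns not just a hit but the reason the indicator is considered bad. The feed contents, the event field names (`src_ip`, `domain`, `file_sha256`), and the context keys are all assumptions for illustration; the addresses and domains are reserved documentation values, not real indicators.

```python
def match_iocs(event, ioc_feed):
    """Return every indicator in the event that appears in the feed, with context.

    `event` is a dict of observed values; `ioc_feed` maps an indicator
    value to a dict describing why it is considered malicious.
    """
    hits = []
    for field in ("src_ip", "domain", "file_sha256"):
        value = event.get(field)
        context = ioc_feed.get(value)
        if context is not None:
            hits.append({"field": field, "indicator": value, **context})
    return hits


# Hypothetical feed entries, for illustration only.
EXAMPLE_FEED = {
    "203.0.113.50": {"threat": "phishing campaign", "severity": "high"},
    "bad.example": {"threat": "malware download site", "severity": "medium"},
}
```

Because each hit carries its `threat` and `severity`, an analyst can tell at a glance whether the match points to phishing, malware delivery, or something else.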

Automating Detection with Intelligence Feeds

Manually checking threat intelligence feeds against your systems would be a huge job. That’s why automation is key. Most modern security tools, like SIEMs and EDRs, can connect to threat intelligence feeds automatically. These feeds are updated constantly with new information about threats. When new IoCs or threat patterns are added to the feed, the security tools can ingest this data and update their detection rules. This means your defenses are always getting smarter and more up-to-date without constant manual intervention. It’s a way to keep your detection capabilities sharp against the ever-changing threat landscape.

Here’s a quick look at how threat intelligence feeds can be integrated:

- Data Source: Threat intelligence platforms or feeds (commercial, open-source, government).

- Integration Method: APIs, STIX/TAXII protocols, or manual uploads.

- Detection Systems: SIEM, IDS/IPS, EDR, firewalls, proxy servers.

- Action: Correlate incoming data with intelligence, generate alerts, block malicious traffic.

Integrating threat intelligence isn’t just about collecting data; it’s about making that data actionable. The goal is to turn raw threat information into specific, timely alerts and automated responses that protect your organization.

Alerting and Incident Response

Once an intrusion detection system flags something suspicious, the next step is figuring out what to do about it. This is where alerting and incident response come into play. It’s not just about getting a notification; it’s about making sure that notification is actually useful and that the right people know about it.

Prioritizing Security Alerts

We get a lot of alerts from our security tools, and honestly, not all of them are emergencies. Some are just noise, others might be minor issues, and a few are genuine threats that need immediate attention. The key is to sort through them effectively. This means setting up our systems so that the most critical alerts, the ones that indicate a real compromise or a significant risk, bubble up to the top. We need to look at things like the severity of the potential threat, how much it could impact our operations, and how likely it is that something bad is actually happening. Without good prioritization, our security teams can get overwhelmed, and real problems might get missed.
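One way to make this prioritization explicit is to compute a score from the factors just mentioned: severity, potential impact, and likelihood. The scoring formula below (a simple product) and the 1 to 5 scales are assumptions chosen for illustration, not a standard; many teams use weighted or tiered schemes instead.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    name: str
    severity: int           # 1 (low) to 5 (critical)
    asset_criticality: int  # 1 (lab machine) to 5 (core business system)
    confidence: float       # 0.0 to 1.0, how likely the alert is a real threat


def priority(alert):
    """Higher scores should be investigated first."""
    return alert.severity * alert.asset_criticality * alert.confidence


def triage(alerts):
    """Return alerts sorted from most to least urgent."""
    return sorted(alerts, key=priority, reverse=True)
```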

Reducing Alert Fatigue

This ties right into prioritization. If you’re constantly bombarded with alerts, many of which turn out to be false alarms or low-priority issues, you start to tune them out. It’s like the boy who cried wolf. This ‘alert fatigue’ is a serious problem because it makes it harder to spot the real threats when they appear. We need to fine-tune our detection rules and systems. This involves regularly reviewing the alerts we receive, identifying patterns that lead to false positives, and adjusting the sensitivity or logic of our detection tools. It’s an ongoing process, but it’s vital for keeping our security team sharp and responsive.

Contextual Information for Investigations

When an alert does come in, and it looks like it might be serious, the security team needs more than just a basic notification. They need context. What system is affected? What user account is involved? What kind of activity is being flagged? What other related events have occurred recently? Providing this kind of background information right in the alert makes it much faster and easier for the team to investigate. Instead of having to hunt down all the pieces of the puzzle, they can start analyzing the situation more effectively. This speeds up the whole incident response process, which is super important when you’re trying to stop an attack before it causes major damage.

Here’s a quick look at what good alert context might include:

- Affected Asset: Details about the server, workstation, or application involved.

- User Information: If a user account is implicated, information about that user and their typical activity.

- Timeline of Events: A brief history of related suspicious activities leading up to the alert.

- Threat Intelligence: Any known indicators of compromise (IOCs) associated with the detected activity.

- Severity Score: A clear indication of how critical the alert is considered.

Effective alerting and incident response aren’t just about having the right technology; they’re about making that technology work for us. It means ensuring that the signals we get are clear, prioritized, and packed with the information needed to act quickly and decisively. Without this, even the best detection systems can fall short when it matters most.

Advanced Detection Techniques

Machine Learning in Intrusion Detection

Machine learning (ML) is really changing the game when it comes to spotting intrusions. Instead of just relying on pre-defined rules or signatures, ML models can learn what normal looks like for your specific network or system. They do this by analyzing tons of data – think network traffic, user activity, system logs – and building a baseline. Once that baseline is established, the ML model can flag anything that significantly deviates from it. This is super helpful for catching new, unknown threats that signature-based systems would miss. It’s not perfect, of course; sometimes legitimate but unusual activity can trigger an alert, leading to what we call false positives. Getting the tuning just right is key.

AI-Driven Attack Detection

AI-driven detection builds on these ML techniques. Beyond flagging individual anomalies, AI-driven systems try to correlate events and infer the intent behind suspicious behavior. Think about it: a system might notice a series of actions that, individually, don’t look too alarming, but when strung together, they paint a picture of a sophisticated attack. AI can also help automate the analysis of massive datasets, something human analysts couldn’t do in a timely manner. This is especially important with the rise of AI-driven attacks themselves, which can be faster and more complex. AI helps us fight fire with fire, so to speak.

Honeypot and Deception Technologies

Honeypots are decoys: systems or data set up to look like valuable targets but actually designed to lure attackers. Because legitimate users have no reason to touch a honeypot, any interaction with one is a strong sign of malicious intent, and security teams get an immediate alert.

Deception technologies take this further by scattering fake credentials, network shares, or other lures throughout the environment. If an attacker tries to use these decoys, it signals that they’ve already bypassed some of your defenses and are moving laterally. This lets you catch attackers in the act and learn about their tactics, techniques, and procedures (TTPs) without putting your actual production systems at risk. The approach can be particularly effective against advanced persistent threats (APTs), which often move slowly and deliberately through a network.
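The fake-credential lure can be sketched very simply: plant account names that no real user or service ever uses, then alert on any authentication attempt that mentions them. The decoy names and the alert fields below are hypothetical.

```python
# Hypothetical decoy accounts. No legitimate user or service uses these.
DECOY_ACCOUNTS = {"svc_backup_old", "admin_test"}


def check_auth_event(username, source_ip):
    """Return a high-severity alert if a decoy credential was used, else None."""
    if username in DECOY_ACCOUNTS:
        return {
            "alert": "decoy credential used",
            "username": username,
            "source_ip": source_ip,
            "severity": "high",
        }
    return None
```

Since nothing legitimate should ever trigger this check, it produces very few false positives compared with anomaly-based methods.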

The effectiveness of these advanced techniques hinges on continuous monitoring and the ability to quickly adapt to new threat patterns. Relying solely on one method is rarely sufficient in today’s complex threat landscape. Integrating multiple detection strategies provides a more robust defense.

Here’s a quick look at how these techniques compare:

| Technique | Primary Focus | Strengths | Weaknesses |
|---|---|---|---|
| Machine Learning (ML) | Anomaly detection, pattern recognition | Detects unknown threats, adapts to changes | Can have false positives, requires tuning |
| AI-Driven Detection | Intent analysis, complex pattern correlation | Understands attack sequences, automates analysis | High complexity, resource-intensive |
| Honeypots & Deception Tech. | Luring attackers, early warning, TTP gathering | High-fidelity alerts, gathers threat intel | Can be complex to deploy, requires maintenance |

These advanced methods are becoming increasingly important as traditional security controls face more sophisticated adversaries. They offer a way to detect threats that might otherwise go unnoticed, allowing for quicker containment and eradication of security incidents.

Putting It All Together

So, we’ve talked about a lot of different ways to spot trouble, from watching network traffic to checking user behavior and keeping an eye on cloud stuff. It’s not just one thing that works; it’s a mix of methods, like using known patterns and also looking for weird, unexpected activity. The main idea is to have eyes everywhere, collecting information and making sense of it. This helps catch things that sneak past the initial defenses. It’s a constant effort, really, always adjusting and learning to stay ahead of whatever might be coming next.

Frequently Asked Questions

What is an Intrusion Detection System (IDS)?

An Intrusion Detection System (IDS) is a tool that watches computers or networks for signs of suspicious or harmful activity. It alerts security teams if it finds anything unusual that could mean someone is trying to break in or attack the system.

How does a network-based IDS work?

A network-based IDS looks at data moving across a network. It checks for strange traffic, bad protocols, or signs of attacks. If it finds something odd, it sends an alert so someone can check it out.

What is the difference between signature-based and anomaly-based detection?

Signature-based detection looks for patterns that match known threats, kind of like looking for a fingerprint. Anomaly-based detection looks for anything that doesn’t fit normal behavior, which can help spot new or unknown threats.

Why is monitoring user behavior important in intrusion detection?

Watching how users and devices act helps spot when someone is doing something they shouldn’t, like logging in at weird times or trying to access files they usually don’t. This can catch hackers or insiders trying to do harm.

How do intrusion detection systems help in cloud environments?

In the cloud, IDS tools watch for strange activity, like odd logins, changes to settings, or weird use of cloud services. They help stop account takeovers and spot mistakes in security settings.

What is alert fatigue and how can it be avoided?

Alert fatigue happens when there are too many security warnings, making it hard to tell what’s important. Tuning detection rules to cut false positives, and sorting and ranking alerts by how serious they are, lets security teams focus on the real problems first.

How does threat intelligence improve intrusion detection?

Threat intelligence gives IDS tools up-to-date info about new attacks, bad websites, and hacker tricks. This helps them spot threats faster and more accurately by knowing what to look for.

Can machine learning help detect intrusions?

Yes, machine learning can help IDS tools learn what normal behavior looks like and spot new or sneaky attacks that don’t match old patterns. With good tuning, this makes detection smarter and faster over time.